I am a second-year PhD student at UC Berkeley, advised by Prof. Kurt Keutzer. My research focuses on efficient training and inference of large language models and diffusion models. I graduated from the Yao Class at Tsinghua University, led by Prof. Andrew Yao.

I work closely with Prof. Song Han at MIT. At Tsinghua University, I was fortunate to be advised by Prof. Jianfei Chen and Prof. Jun Zhu. I was also advised by Prof. Sheng Wang at the University of Washington.

May 2025 - Aug 2025

San Jose, CA, USA

Research internship at NVIDIA, advised by Prof. Song Han (MIT Han Lab).


Feb 2024 - Aug 2024

Beijing, China

Conducted research on FP8 training for large language models, advised by Prof. Song Han.


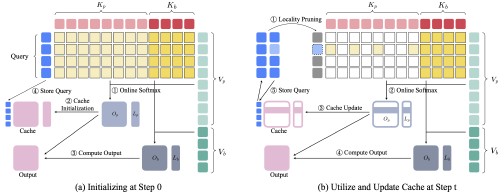

We propose LoSA, a locality-aware sparse attention for block-wise diffusion language models. LoSA reuses cached prefix-attention for stable tokens and applies sparse attention only to active tokens, achieving up to +9 accuracy points at aggressive sparsity, 1.54x lower attention density, and 4.14x attention speedup.

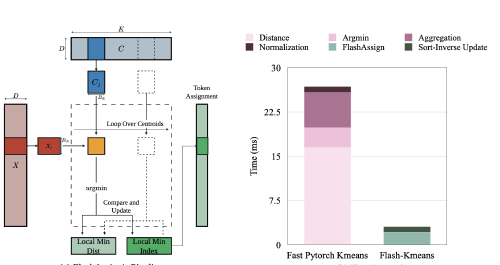

We propose Flash-KMeans, an IO-aware and contention-free k-means for modern GPUs. FlashAssign fuses distance computation with online argmin; Sort-Inverse Update transforms atomic scatters into localized reductions. Achieves up to 17.9x end-to-end speedup, 33x over cuML, and 200x+ over FAISS on H200 GPUs.
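
The two ideas above can be illustrated in plain Python, leaving aside the GPU kernels that make them fast. This is a minimal sketch under our own assumptions, not the Flash-KMeans implementation: `assign_online_argmin` scans centroids tile by tile and keeps only a running best distance and index per point, so the full N×K distance matrix is never stored; `sorted_segment_update` sorts point indices by cluster id and averages each contiguous run instead of scattering every point into its centroid's accumulator. All function names and the `tile` parameter are hypothetical.

```python
# Hypothetical helper names; an illustrative sketch, not the Flash-KMeans kernels.
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]


def sq_dist(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance between two points."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def assign_online_argmin(points: List[Point], centroids: List[Point], tile: int = 2) -> List[int]:
    """Nearest-centroid labels, scanning centroids one tile at a time.

    Each point keeps only a running (best distance, best index) pair, so the
    full N x K distance matrix is never materialized.
    """
    labels = []
    for p in points:
        best_d, best_k = float("inf"), -1
        for start in range(0, len(centroids), tile):
            for k in range(start, min(start + tile, len(centroids))):
                d = sq_dist(p, centroids[k])
                if d < best_d:  # fold this tile's distances into the running argmin
                    best_d, best_k = d, k
        labels.append(best_k)
    return labels


def sorted_segment_update(points: List[Point], labels: List[int], centroids: List[Point]) -> List[Point]:
    """New centroids from contiguous per-cluster runs of a label-sorted index order."""
    order = sorted(range(len(points)), key=lambda i: labels[i])
    new_centroids = list(centroids)  # empty clusters keep their previous centroid
    i = 0
    while i < len(order):
        k = labels[order[i]]
        acc = [0.0] * len(points[0])
        j = i
        while j < len(order) and labels[order[j]] == k:  # one contiguous run per cluster
            acc = [a + x for a, x in zip(acc, points[order[j]])]
            j += 1
        new_centroids[k] = tuple(a / (j - i) for a in acc)
        i = j
    return new_centroids


pts = [(0.0, 0.0), (0.0, 1.0), (10.0, 10.0), (10.0, 11.0)]
cents = [(0.0, 0.0), (10.0, 10.0), (5.0, 5.0)]
labels = assign_online_argmin(pts, cents)
print(labels)  # [0, 0, 1, 1]
print(sorted_segment_update(pts, labels, cents))  # [(0.0, 0.5), (10.0, 10.5), (5.0, 5.0)]
```

On a GPU, the appeal of the sorted layout is that each cluster's reduction reads a contiguous slice instead of many threads atomically updating the same centroid; this Python version only shows the data flow, not the speedup.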

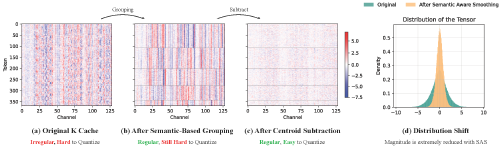

We present Quant VideoGen (QVG), a training-free KV cache quantization framework for autoregressive video diffusion with semantic-aware smoothing and progressive residual quantization. Reduces KV cache memory by up to 7× with under 4% end-to-end latency overhead while improving quality over baselines.
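
Progressive residual quantization can be sketched as repeatedly quantizing whatever error the earlier stages left behind. The sketch below is an illustration under stated assumptions, not QVG's quantizer: each stage uses a symmetric per-tensor uniform quantizer (our choice), semantic-aware smoothing is not modeled, and all function names are hypothetical.

```python
# Hypothetical helpers; a generic residual-quantization sketch, not QVG's implementation.
from typing import List, Tuple


def quantize_uniform(x: List[float], bits: int) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization to signed `bits`-bit integers."""
    qmax = 2 ** (bits - 1) - 1
    scale = max(abs(v) for v in x) / qmax or 1.0  # fall back to 1.0 for an all-zero input
    return [max(-qmax, min(qmax, round(v / scale))) for v in x], scale


def dequantize(q: List[int], scale: float) -> List[float]:
    return [v * scale for v in q]


def progressive_residual_quantize(x: List[float], bits: int, stages: int) -> List[Tuple[List[int], float]]:
    """Stage s quantizes the residual left over by all earlier stages."""
    residual = list(x)
    codes = []
    for _ in range(stages):
        q, scale = quantize_uniform(residual, bits)
        codes.append((q, scale))
        residual = [r - a for r, a in zip(residual, dequantize(q, scale))]
    return codes


def reconstruct(codes: List[Tuple[List[int], float]], n: int) -> List[float]:
    """Sum the dequantized contributions of all stages."""
    out = [0.0] * n
    for q, scale in codes:
        out = [o + a for o, a in zip(out, dequantize(q, scale))]
    return out


def max_error(x: List[float], bits: int, stages: int) -> float:
    rec = reconstruct(progressive_residual_quantize(x, bits, stages), len(x))
    return max(abs(a - b) for a, b in zip(x, rec))


x = [0.9, -0.35, 0.12, 0.51, -0.77, 0.03]
for stages in (1, 2, 3):
    print(stages, max_error(x, bits=2, stages=stages))
```

With 2-bit stages on this toy vector, the maximum reconstruction error drops with each added stage, showing how extra stages trade a little more storage for lower error.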

We unify FP8 precision across RL training and rollout to avoid off-policy numerical mismatch from BF16-train + FP8-rollout, which can destabilize long-horizon RL. Achieves up to 33% rollout speedup, 41% training speedup, and 16% end-to-end speedup over BF16 with stable convergence.

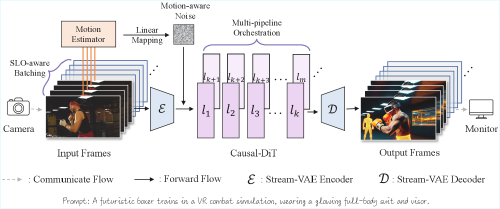

We present StreamDiffusionV2, a training-free streaming system that adapts video diffusion models for interactive, low-latency live generation. It integrates an SLO-aware batching scheduler, sink-token-guided rolling KV cache, motion-aware noise controller, and scalable pipeline orchestration across denoising steps and network layers. Achieves 0.5s time-to-first-frame and up to 58.28 FPS (14B) / 64.52 FPS (1.3B) on 4xH100 without TensorRT or quantization.
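
A sink-token rolling KV cache can be pictured with a minimal sketch, assuming the common attention-sink design: the first few cache entries are pinned permanently and the rest of the budget holds a sliding window of the most recent entries. This is not StreamDiffusionV2's implementation; the class name and the `num_sink` and `window` parameters are hypothetical, and strings stand in for per-frame key/value tensors.

```python
# Hypothetical class and parameters; a sink + sliding-window sketch, not StreamDiffusionV2's code.
from collections import deque
from typing import Deque, List


class SinkRollingKVCache:
    """Keeps the first `num_sink` entries forever plus the most recent `window` entries."""

    def __init__(self, num_sink: int, window: int) -> None:
        self.num_sink = num_sink
        self.sinks: List[str] = []
        self.recent: Deque[str] = deque(maxlen=window)  # deque drops its oldest entry when full

    def append(self, entry: str) -> None:
        if len(self.sinks) < self.num_sink:
            self.sinks.append(entry)  # the earliest entries become permanent sinks
        else:
            self.recent.append(entry)

    def contents(self) -> List[str]:
        """Entries visible to attention, in temporal order."""
        return self.sinks + list(self.recent)


cache = SinkRollingKVCache(num_sink=2, window=3)
for t in range(8):
    cache.append(f"kv{t}")
print(cache.contents())  # ['kv0', 'kv1', 'kv5', 'kv6', 'kv7']
```

The cache size stays bounded at `num_sink + window` no matter how long the stream runs, while the pinned entries remain visible to every later step.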

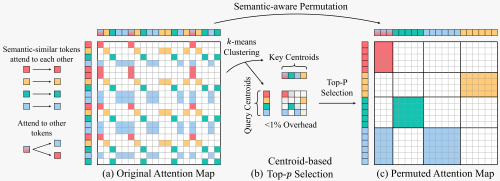

We propose a training-free sparse attention framework that uses semantic-aware permutation (clustering tokens with k-means by semantic similarity and reordering them accordingly) to reach a new Pareto frontier in the speed-quality trade-off.
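
The reordering step behind semantic-aware permutation can be sketched as follows, assuming cluster labels are already available (for example from a k-means pass over token features). Tokens are stably sorted by cluster id so that semantically similar tokens form contiguous blocks that block-sparse attention can handle together, and the inverse permutation restores the original order afterwards. The helper names and toy labels are hypothetical.

```python
# Hypothetical helpers; a sketch of cluster-based token reordering, not the paper's code.
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def cluster_permutation(labels: Sequence[int]) -> List[int]:
    """Indices that stably sort tokens by cluster id, making same-cluster tokens contiguous."""
    return sorted(range(len(labels)), key=lambda i: labels[i])


def inverse_permutation(perm: Sequence[int]) -> List[int]:
    """inv[perm[p]] = p, so gathering with inv undoes gathering with perm."""
    inv = [0] * len(perm)
    for new_pos, old_pos in enumerate(perm):
        inv[old_pos] = new_pos
    return inv


def gather(perm: Sequence[int], items: Sequence[T]) -> List[T]:
    return [items[i] for i in perm]


tokens = ["sky", "dog", "cloud", "cat", "sun", "fox"]
labels = [0, 1, 0, 1, 0, 1]  # toy cluster ids, e.g. from k-means on token features
perm = cluster_permutation(labels)
permuted = gather(perm, tokens)
print(permuted)  # ['sky', 'cloud', 'sun', 'dog', 'cat', 'fox']
restored = gather(inverse_permutation(perm), permuted)
print(restored == tokens)  # True
```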

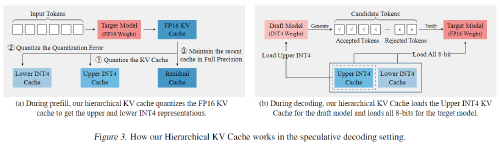

We propose QuantSpec, a self-speculative decoding framework that speeds up long-context inference. QuantSpec maintains high acceptance rates (>90%) and delivers consistent end-to-end speedups of up to ~2.5×.
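
Self-speculative decoding follows the generic draft-then-verify loop. Below is a minimal greedy sketch of that generic technique, not QuantSpec's code, assuming the draft is a cheaper (for example, quantized) version of the same model; `draft_model` and `target_model` are hypothetical toy next-token functions. The draft proposes `gamma` tokens, the target checks them, and the longest agreeing prefix is kept plus one token from the target.

```python
# Generic greedy speculative decoding sketch; the toy models below are hypothetical.
from typing import Callable, List, Tuple

NextToken = Callable[[List[int]], int]  # greedy next-token function


def speculative_step(prefix: List[int], draft: NextToken, target: NextToken, gamma: int) -> Tuple[List[int], int]:
    """One draft-then-verify round. Returns (tokens to append, number of draft tokens accepted)."""
    # 1) The cheap draft model proposes `gamma` tokens autoregressively.
    proposal, ctx = [], list(prefix)
    for _ in range(gamma):
        tok = draft(ctx)
        proposal.append(tok)
        ctx.append(tok)
    # 2) The target model checks them (a single batched pass in a real system).
    out, ctx = [], list(prefix)
    for tok in proposal:
        expected = target(ctx)
        if expected != tok:
            out.append(expected)  # first mismatch: keep the target's token and stop
            return out, len(out) - 1
        out.append(tok)
        ctx.append(tok)
    out.append(target(ctx))  # all accepted: the target contributes one extra token
    return out, gamma


def target_model(ctx: List[int]) -> int:
    return (ctx[-1] + 1) % 10


def draft_model(ctx: List[int]) -> int:
    # Agrees with the target except after token 4 (a stand-in for quantization error).
    return 0 if ctx[-1] == 4 else (ctx[-1] + 1) % 10


print(speculative_step([1], draft_model, target_model, gamma=4))  # ([2, 3, 4, 5], 3)
```

The acceptance rate of a round is the number of accepted draft tokens divided by `gamma`; when it is high, most rounds emit several tokens per verification pass of the full model.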

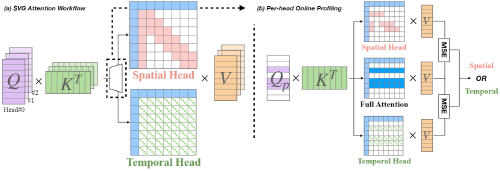

We identify spatial-head and temporal-head patterns in the attention maps and exploit them with sparse attention for acceleration, achieving up to 2.28x and 2.33x end-to-end speedup on CogVideoX-v1.5 and HunyuanVideo, respectively.
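
One way to picture the two head patterns is as attention masks over a video token sequence. The sketch assumes tokens are laid out frame by frame with a fixed number of tokens per frame (our assumption about the layout): a spatial head attends only within the same frame, and a temporal head attends only to the same spatial position across frames. Function names are hypothetical.

```python
# Hypothetical mask builders; an illustration of the two patterns, not the paper's kernels.
from typing import List

Mask = List[List[bool]]


def spatial_mask(num_frames: int, tokens_per_frame: int) -> Mask:
    """Query i may attend key j only if both tokens lie in the same frame."""
    n = num_frames * tokens_per_frame
    return [[i // tokens_per_frame == j // tokens_per_frame for j in range(n)] for i in range(n)]


def temporal_mask(num_frames: int, tokens_per_frame: int) -> Mask:
    """Query i may attend key j only if both share the same position within their frames."""
    n = num_frames * tokens_per_frame
    return [[i % tokens_per_frame == j % tokens_per_frame for j in range(n)] for i in range(n)]


def density(mask: Mask) -> float:
    """Fraction of query-key pairs that would be computed."""
    return sum(map(sum, mask)) / (len(mask) ** 2)


F, P = 4, 8  # toy sizes: 4 frames of 8 tokens each
print(density(spatial_mask(F, P)), density(temporal_mask(F, P)))  # 0.25 0.125
```

With F frames of P tokens each, the spatial mask keeps 1/F of the query-key pairs and the temporal mask keeps 1/P, which is where the potential savings come from.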

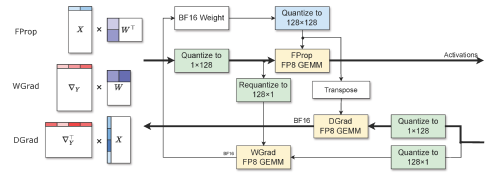

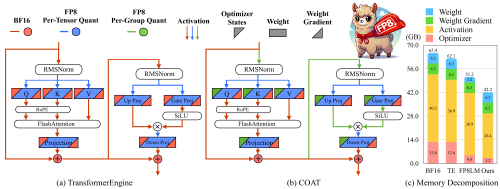

We propose dynamic range expansion for FP8 optimizer states and an FP8 precision flow for FP8 activations, achieving lossless performance with an end-to-end 1.54x memory reduction and 1.43x training speedup over BF16.
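
Dynamic range expansion can be illustrated with a minimal sketch, assuming it takes the form of a sign-preserving power function v ↦ sign(v) * |v|**k, with k chosen so that a group's max/min magnitude ratio matches a target range. This is our assumption of one natural form, not a verified reproduction of the paper; the `target_ratio` value and function names are hypothetical, and the subsequent scaling and FP8 cast are not simulated.

```python
# Assumed power-function expansion with hypothetical names; FP8 casting is not simulated.
import math
from typing import List, Tuple


def expand_dynamic_range(group: List[float], target_ratio: float) -> Tuple[List[float], float]:
    """Map v -> sign(v) * |v|**k so the group's max/min magnitude ratio becomes `target_ratio`.

    Assumes every entry is nonzero.
    """
    mags = [abs(v) for v in group]
    ratio = max(mags) / min(mags)
    k = math.log(target_ratio) / math.log(ratio) if ratio > 1 else 1.0
    return [math.copysign(abs(v) ** k, v) for v in group], k


def contract_dynamic_range(group: List[float], k: float) -> List[float]:
    """Inverse map: v -> sign(v) * |v|**(1/k)."""
    return [math.copysign(abs(v) ** (1.0 / k), v) for v in group]


def magnitude_ratio(group: List[float]) -> float:
    mags = [abs(v) for v in group]
    return max(mags) / min(mags)


state = [1e-3, -4e-3, 2e-3, 8e-3]  # a small-range group, e.g. part of an optimizer state
expanded, k = expand_dynamic_range(state, target_ratio=4096.0)
print(k, magnitude_ratio(state), magnitude_ratio(expanded))  # k is about 4; ratio about 8 -> about 4096
restored = contract_dynamic_range(expanded, k)
```

In a full pipeline the expanded group would presumably then be scaled so its largest magnitude reaches the FP8 maximum before casting, with the inverse map applied after dequantization.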

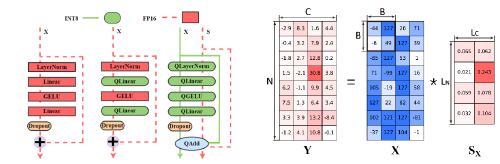

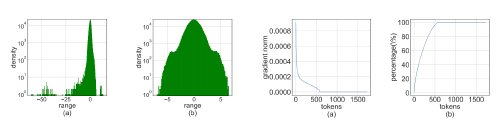

We propose a new method for efficient and accurate transformer pretraining with INT8 data flow and per-block quantization, and demonstrate its effectiveness on the GPT2-774M model, achieving an end-to-end 1.42x training speedup and 1.49x memory reduction.
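
Per-block quantization gives each tile of a matrix its own scale, so an outlier only coarsens the tile it lives in. A minimal sketch, assuming symmetric absmax scaling to INT8 and square tiles; the tile size, the toy matrix, and the function names are hypothetical, and this is not the paper's kernel.

```python
# Hypothetical helpers; symmetric absmax INT8 per-tile quantization sketch.
from typing import List, Tuple

Matrix = List[List[float]]


def quantize_per_block(x: Matrix, block: int) -> Tuple[List[List[int]], Matrix]:
    """Symmetric INT8 quantization with one absmax scale per (block x block) tile."""
    rows, cols = len(x), len(x[0])
    q = [[0] * cols for _ in range(rows)]
    scales: Matrix = []
    for bi in range(0, rows, block):
        scale_row = []
        for bj in range(0, cols, block):
            r_idx = range(bi, min(bi + block, rows))
            c_idx = range(bj, min(bj + block, cols))
            scale = max(abs(x[i][j]) for i in r_idx for j in c_idx) / 127 or 1.0  # guard all-zero tiles
            scale_row.append(scale)
            for i in r_idx:
                for j in c_idx:
                    q[i][j] = max(-127, min(127, round(x[i][j] / scale)))
        scales.append(scale_row)
    return q, scales


def dequantize_per_block(q: List[List[int]], scales: Matrix, block: int) -> Matrix:
    return [[q[i][j] * scales[i // block][j // block] for j in range(len(q[0]))] for i in range(len(q))]


def total_abs_error(x: Matrix, block: int) -> float:
    q, scales = quantize_per_block(x, block)
    y = dequantize_per_block(q, scales, block)
    return sum(abs(a - b) for ra, rb in zip(x, y) for a, b in zip(ra, rb))


x = [
    [100.0, 0.5, 0.01, 0.02],  # one outlier in the top-left 2x2 tile
    [0.3, 0.2, 0.03, 0.04],
    [0.01, 0.02, 0.03, 0.04],
    [0.05, 0.06, 0.07, 0.08],
]
per_tensor = total_abs_error(x, block=4)  # a single tile: one scale for the whole matrix
per_block = total_abs_error(x, block=2)   # four 2x2 tiles, each with its own scale
print(per_block < per_tensor)  # True
```

With a single scale (`block=4`), the outlier forces most small entries to round to zero; with 2×2 tiles, only the outlier's own tile loses precision.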

We propose a Hadamard quantizer and leverage score sampling to enable INT4 matrix multiplications in training. Both the forward and backward passes are quantized to INT4 precision to maximize speedup. The method outperforms all existing 4-bit training baselines.
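
The intuition for a Hadamard quantizer is that an orthogonal Hadamard rotation spreads an outlier's energy over all coordinates before quantization, and because the normalized matrix is its own inverse the rotation can be undone exactly (in a matmul, inserting H·Hᵀ between the two operands leaves the product unchanged). The sketch below assumes a normalized Sylvester-construction Hadamard matrix and symmetric absmax INT4 quantization to levels -7..7; it is an illustration, not the paper's quantizer, leverage score sampling is not shown, and the function names are hypothetical.

```python
# Hypothetical helpers; Hadamard rotation + INT4 round-trip illustration, not the paper's quantizer.
import math
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def hadamard(n: int) -> Matrix:
    """Normalized Sylvester Hadamard matrix (n a power of two); symmetric, and H times H is the identity."""
    h = [[1.0]]
    while len(h) < n:
        h = [row + row for row in h] + [row + [-v for v in row] for row in h]
    s = 1.0 / math.sqrt(n)
    return [[v * s for v in row] for row in h]


def matvec(m: Matrix, v: Vector) -> Vector:
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def int4_roundtrip(v: Vector) -> Vector:
    """Symmetric INT4 quantize/dequantize with one absmax scale (levels -7..7)."""
    scale = max(abs(t) for t in v) / 7 or 1.0
    return [max(-7, min(7, round(t / scale))) * scale for t in v]


def l2(v: Vector) -> float:
    return math.sqrt(sum(t * t for t in v))


def peak_to_norm(v: Vector) -> float:
    """How outlier-dominated a vector is: 1.0 means a single spike."""
    return max(abs(t) for t in v) / l2(v)


x = [8.0, 0.1, -0.2, 0.3, 0.15, -0.1, 0.25, -0.3]  # toy vector with one large outlier
H = hadamard(len(x))
hx = matvec(H, x)  # rotated vector: the outlier's energy is spread over all entries
print(peak_to_norm(x), peak_to_norm(hx))

plain = int4_roundtrip(x)
rotated = matvec(H, int4_roundtrip(hx))  # quantize in the rotated basis, then rotate back
print(l2([a - b for a, b in zip(x, plain)]), l2([a - b for a, b in zip(x, rotated)]))
```

On this toy vector the rotation lowers both the peak-to-norm ratio and the L2 reconstruction error, because the small entries no longer all round to zero under a scale set by the outlier.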

We propose LoSA, a locality-aware sparse attention for block-wise diffusion language models. LoSA reuses cached prefix-attention for stable tokens and applies sparse attention only to active tokens, achieving up to +9 accuracy points at aggressive sparsity, 1.54x lower attention density, and 4.14x attention speedup.

We propose Flash-KMeans, an IO-aware and contention-free k-means for modern GPUs. FlashAssign fuses distance computation with online argmin; Sort-Inverse Update transforms atomic scatters into localized reductions. Achieves up to 17.9x end-to-end speedup, 33x over cuML, and 200x+ over FAISS on H200 GPUs.

We present Quant VideoGen (QVG), a training-free KV cache quantization framework for autoregressive video diffusion with semantic-aware smoothing and progressive residual quantization. Reduces KV cache memory by up to 7× with under 4% end-to-end latency overhead while improving quality over baselines.

We unify FP8 precision across RL training and rollout to avoid off-policy numerical mismatch from BF16-train + FP8-rollout, which can destabilize long-horizon RL. Achieves up to 33% rollout speedup, 41% training speedup, and 16% end-to-end speedup over BF16 with stable convergence.

We present StreamDiffusionV2, a training-free streaming system that adapts video diffusion models for interactive, low-latency live generation. Combines SLO-aware batching, sink-token rolling KV cache, motion-aware noise control, and pipeline orchestration across denoising steps and network layers. Achieves 0.5s time-to-first-frame and up to 58.28 FPS (14B) / 64.52 FPS (1.3B) on 4xH100 without TensorRT or quantization.

We propose a training-free sparse attention framework that uses semantic-aware permutation (clustering tokens with k-means by semantic similarity and reordering them accordingly) to reach a new Pareto frontier in the speed-quality trade-off.

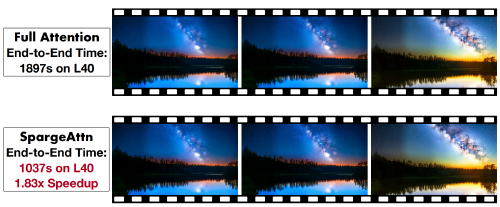

We propose SpargeAttn, a universal sparse and quantized attention that accelerates inference for any model. It uses a two-stage online filter to select the most important tokens.

We propose QuantSpec, a self-speculative decoding framework that speeds up long-context inference. QuantSpec maintains high acceptance rates (>90%) and delivers consistent end-to-end speedups of up to ~2.5×.

We identify spatial-head and temporal-head patterns in the attention maps and exploit them with sparse attention for acceleration, achieving up to 2.28x and 2.33x end-to-end speedup on CogVideoX-v1.5 and HunyuanVideo, respectively.

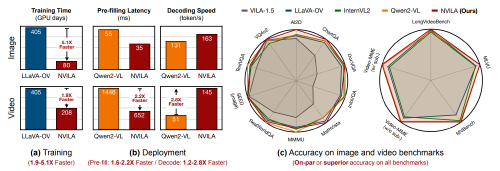

We propose NVILA, a new frontier visual language model that reduces training costs by 4.5x, fine-tuning memory usage by 3.4x, pre-filling latency by 1.6-2.2x, and decoding latency by 1.2-2.8x.

We propose dynamic range expansion for FP8 optimizer states and an FP8 precision flow for FP8 activations, achieving lossless performance with an end-to-end 1.54x memory reduction and 1.43x training speedup over BF16.

We propose an INT8 precision flow and per-block quantization to enable INT8 pretraining of transformers, and demonstrate their effectiveness on the GPT2-774M model, achieving an end-to-end 1.42x training speedup and 1.49x memory reduction.

We propose a Hadamard quantizer and leverage score sampling to enable INT4 matrix multiplications in training. Both the forward and backward passes are quantized to INT4 precision to maximize speedup. The method outperforms all existing 4-bit training baselines.